A high-availability cluster, also known as a failover cluster (active-passive cluster), is one of the most widely used cluster types in production environments. It keeps services available even when one of the nodes in the group fails. If the server running an application fails for some reason (for example, a hardware failure), the cluster software (Pacemaker) restarts the application on another node.

In production, this type of cluster is mainly used for databases, custom applications, and file sharing. Failover is more than just starting an application; it involves a series of operations such as mounting filesystems, configuring networks, and starting dependent applications.

CentOS 7 / RHEL 7 supports failover clusters using Pacemaker. Here we will configure the Apache (web) server as a highly available application. As mentioned, failover is a series of operations, so we also need to configure the filesystem and the network as cluster resources. For the filesystem, we will use shared storage from an iSCSI server.

Our Environment:

Cluster Nodes:

bir.emre.local 192.168.12.11

iki.emre.local 192.168.12.12

iSCSI Storage:

server.emre.local 192.168.12.20

All nodes run CentOS Linux release 7.2.1511 (Core) on VMware Workstation.

Building Infrastructure:

iSCSI shared storage:

Shared storage is one of the most important resources in a high-availability cluster because it holds the data of the running application. All nodes in the cluster need access to the shared storage so that whichever node runs the application sees the most recent data. SAN is the most widely used shared storage in production environments; here, we will configure the cluster with iSCSI storage for demonstration purposes.

Here, we will create a 10GB LVM logical volume on the iSCSI server to use as shared storage for our cluster nodes. Let's list the disks attached to the target server using the command below.

[root@server ~]# fdisk -l | grep -i sd
Disk /dev/sda: 107.4 GB, 107374182400 bytes, 209715200 sectors
/dev/sda1 * 2048 1026047 512000 83 Linux
/dev/sda2 1026048 209715199 104344576 8e Linux LVM
Disk /dev/sdb: 10.7 GB, 10737418240 bytes, 20971520 sectors

From the above output, you can see that my system has a 10GB disk (/dev/sdb). Create an LVM logical volume on /dev/sdb (replace /dev/sdb with your disk name).

[root@server ~]# pvcreate /dev/sdb
[root@server ~]# vgcreate vg_iscsi /dev/sdb
[root@server ~]# lvcreate -l 100%FREE -n lv_iscsi vg_iscsi
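Because pvcreate initializes whatever disk it is pointed at, it can help to double-check the size of the disk first. The following is a minimal sketch, not part of the original procedure: disk_gib is a hypothetical helper that takes one "Disk ..." line as printed by fdisk above and converts the byte count to whole GiB.

```shell
# Hypothetical helper: extract the byte count from an fdisk "Disk ..." line
# and print the size in whole GiB (integer division, rounds down).
disk_gib() {
    local line="$1"
    local bytes
    # Capture the number that directly precedes the word "bytes".
    bytes=$(printf '%s\n' "$line" | sed -n 's/.*, \([0-9][0-9]*\) bytes.*/\1/p')
    # Fail if the line does not look like fdisk output.
    [ -n "$bytes" ] || return 1
    echo $(( bytes / 1024 / 1024 / 1024 ))
}
```

For example, disk_gib "$(fdisk -l /dev/sdb | grep '^Disk /dev/sdb')" could be used to confirm that the disk is the expected 10GB one before creating the physical volume.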

Creating iSCSI target:

Install the targetcli package on the server.

[root@server ~]# yum install targetcli -y

Once you have installed the package, enter the command below to get an interactive iSCSI CLI prompt.

[root@server ~]# targetcli
Warning: Could not load preferences file /root/.targetcli/prefs.bin.
targetcli shell version 2.1.fb41
Copyright 2011-2013 by Datera, Inc and others.
For help on commands, type 'help'.
/> cd /backstores/block
/backstores/block> create iscsi_shared_storage /dev/vg_iscsi/lv_iscsi
Created block storage object iscsi_shared_storage using /dev/vg_iscsi/lv_iscsi.
/backstores/block> cd /iscsi
/iscsi> create iqn.2016-03.local.emre.server:cluster
Created target iqn.2016-03.local.emre.server:cluster.
Created TPG 1.
Global pref auto_add_default_portal=true
Created default portal listening on all IPs (0.0.0.0), port 3260.
/iscsi> cd /iscsi/iqn.2016-03.local.emre.server:cluster/tpg1/acls
/iscsi/iqn.20...ter/tpg1/acls> create iqn.2016-03.local.emre.server:biriki
Created Node ACL for iqn.2016-03.local.emre.server:biriki
/iscsi/iqn.20...ter/tpg1/acls> cd /iscsi/iqn.2016-03.local.emre.server:cluster/tpg1/
/iscsi/iqn.20...:cluster/tpg1> set attribute authentication=0
Parameter authentication is now '0'.
/iscsi/iqn.20...:cluster/tpg1> set attribute generate_node_acls=1
Parameter generate_node_acls is now '1'.
/iscsi/iqn.20...:cluster/tpg1> cd /iscsi/iqn.2016-03.local.emre.server:cluster/tpg1/luns
/iscsi/iqn.20...ter/tpg1/luns> create /backstores/block/iscsi_shared_storage
Created LUN 0.
Created LUN 0->0 mapping in node ACL iqn.2016-03.local.emre.server:biriki
/iscsi/iqn.20...ter/tpg1/luns> cd /
/> ls
o- / ........................................................ [...]
  o- backstores ............................................. [...]
  | o- block ................................. [Storage Objects: 1]
  | | o- iscsi_shared_storage ... [/dev/vg_iscsi/lv_iscsi (10.0GiB) write-thru activated]
  | o- fileio ................................ [Storage Objects: 0]
  | o- pscsi ................................. [Storage Objects: 0]
  | o- ramdisk ............................... [Storage Objects: 0]
  o- iscsi ........................................... [Targets: 1]
  | o- iqn.2016-03.local.emre.server:cluster ............ [TPGs: 1]
  |   o- tpg1 ................................ [gen-acls, no-auth]
  |     o- acls ......................................... [ACLs: 1]
  |     | o- iqn.2016-03.local.emre.server:biriki .. [Mapped LUNs: 1]
  |     |   o- mapped_lun0 ..... [lun0 block/iscsi_shared_storage (rw)]
  |     o- luns ......................................... [LUNs: 1]
  |     | o- lun0 ... [block/iscsi_shared_storage (/dev/vg_iscsi/lv_iscsi)]
  |     o- portals ................................... [Portals: 1]
  |       o- 0.0.0.0:3260 .................................... [OK]
  o- loopback ........................................ [Targets: 0]
/> saveconfig
Last 10 configs saved in /etc/target/backup.
Configuration saved to /etc/target/saveconfig.json
/> exit
Global pref auto_save_on_exit=true
Last 10 configs saved in /etc/target/backup.
Configuration saved to /etc/target/saveconfig.json
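The same target setup can also be scripted, since targetcli accepts a path and a command as arguments instead of an interactive session. The sketch below is an assumption-laden outline rather than a tested procedure: the run helper and the APPLY switch are hypothetical names introduced here, and by default the function only prints the commands it would execute.

```shell
# Hypothetical safety wrapper: always print the command,
# execute it only when APPLY=1 is set in the environment.
run() {
    echo "+ $*"
    if [ "${APPLY:-0}" = "1" ]; then
        "$@"
    fi
}

# Names taken from the interactive session above.
TARGET_IQN="iqn.2016-03.local.emre.server:cluster"
CLIENT_IQN="iqn.2016-03.local.emre.server:biriki"
BACKSTORE="iscsi_shared_storage"
LV_PATH="/dev/vg_iscsi/lv_iscsi"

# Same order as the interactive session: backstore, target, ACL,
# TPG attributes, LUN, then save the configuration.
setup_target() {
    run targetcli /backstores/block create "$BACKSTORE" "$LV_PATH"
    run targetcli /iscsi create "$TARGET_IQN"
    run targetcli "/iscsi/$TARGET_IQN/tpg1/acls" create "$CLIENT_IQN"
    run targetcli "/iscsi/$TARGET_IQN/tpg1" set attribute authentication=0 generate_node_acls=1
    run targetcli "/iscsi/$TARGET_IQN/tpg1/luns" create "/backstores/block/$BACKSTORE"
    run targetcli saveconfig
}
```

Calling setup_target prints the six commands for review; running it as APPLY=1 setup_target on the storage server would execute them.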

Enable and restart the target service.

[root@server ~]# systemctl enable target.service
[root@server ~]# systemctl restart target.service

Configure the firewall to allow iSCSI traffic.

[root@server ~]# firewall-cmd --permanent --add-port=3260/tcp
[root@server ~]# firewall-cmd --reload

Configure Initiator:

It's time to configure the cluster nodes to make use of the iSCSI storage. Perform the steps below on all of your cluster nodes.

# yum install iscsi-initiator-utils -y

Discover the target using the command below.

# iscsiadm -m discovery -t st -p 192.168.12.20
192.168.12.20:3260,1 iqn.2016-03.local.emre.server:cluster

Edit the file below and set the iSCSI initiator name. It must match the ACL name created on the target.

# vi /etc/iscsi/initiatorname.iscsi
InitiatorName=iqn.2016-03.local.emre.server:biriki
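A typo in the initiator name is an easy mistake to make, so a quick format check may be worthwhile. The sketch below is an illustration only: is_valid_iqn and initiator_name are hypothetical helpers, and the pattern is a simplified assumption about the iqn.YYYY-MM.reversed-domain:identifier layout, not a complete validator.

```shell
# Hypothetical check: return 0 if the argument looks like
# iqn.YYYY-MM.<reversed domain>[:<identifier>] (simplified pattern).
is_valid_iqn() {
    printf '%s\n' "$1" | grep -Eq \
        '^iqn\.[0-9]{4}-(0[1-9]|1[0-2])\.[a-z0-9]([a-z0-9.-]*[a-z0-9])?(:[^[:space:]]+)?$'
}

# Print the InitiatorName value from the given file
# (defaults to the file edited above).
initiator_name() {
    sed -n 's/^InitiatorName=//p' "${1:-/etc/iscsi/initiatorname.iscsi}"
}
```

On a node, is_valid_iqn "$(initiator_name)" && echo "initiator name looks fine" gives a quick sanity check before logging in to the target.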

Log in to the target.

# iscsiadm -m node -T iqn.2016-03.local.emre.server:cluster -p 192.168.12.20 -l
Logging in to [iface: default, target: iqn.2016-03.local.emre.server:cluster, portal: 192.168.12.20,3260] (multiple)
Login to [iface: default, target: iqn.2016-03.local.emre.server:cluster, portal: 192.168.12.20,3260] successful.

Restart and enable the initiator service.

# systemctl restart iscsid.service
# systemctl enable iscsid.service

Setup Cluster Nodes:

On each of your nodes, check whether the new disk is visible. In my case, /dev/sdb is the new disk.

# fdisk -l | grep -i sd
Disk /dev/sda: 107.4 GB, 107374182400 bytes, 209715200 sectors
/dev/sda1 * 2048 1026047 512000 83 Linux
/dev/sda2 1026048 209715199 104344576 8e Linux LVM
Disk /dev/sdb: 10.7 GB, 10733223936 bytes, 20963328 sectors

On any one of your nodes (e.g., bir), create an LVM logical volume with an ext4 filesystem using the commands below.

[root@bir ~]# pvcreate /dev/sdb
[root@bir ~]# vgcreate vg_apache /dev/sdb
[root@bir ~]# lvcreate -n lv_apache -l 100%FREE vg_apache
[root@bir ~]# mkfs.ext4 /dev/vg_apache/lv_apache

Now, go to your remaining nodes and run the commands below so that they detect the new volume.

[root@iki ~]# pvscan
[root@iki ~]# vgscan
[root@iki ~]# lvscan

Finally, verify that the LV we created on bir is available on all your remaining nodes (e.g., iki) using the command below. You should see /dev/vg_apache/lv_apache on all your nodes.

[root@iki ~]# lvdisplay /dev/vg_apache/lv_apache

Output:

--- Logical volume ---
LV Path                /dev/vg_apache/lv_apache
LV Name                lv_apache
VG Name                vg_apache
LV UUID                UDux3r-VKXO-pjgX-J2mE-M0U0-EO4r-qP2ziq
LV Write Access        read/write
LV Creation host, time node2, 2017-08-24 15:09:00 +0000
LV Status              available
# open                 0
LV Size                10.00 GiB
Current LE             2559
Segments               1
Allocation             inherit
Read ahead sectors     auto
- currently set to     8192
Block device           253:0

Add a host entry for every node on each node; the cluster uses host names for communication between the nodes. Perform this step on all of your cluster nodes.

# vi /etc/hosts

192.168.12.11 bir.emre.local bir
192.168.12.12 iki.emre.local iki
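Since the same entries have to be added on every node, the edit can be scripted so that it is safe to run more than once. This is a minimal sketch under that assumption; add_host_entry is a hypothetical helper, and the optional fourth argument exists only so the function can be tried against a scratch file instead of /etc/hosts.

```shell
# Hypothetical helper: append "IP FQDN SHORTNAME" to a hosts file
# unless the FQDN is already present (idempotent).
add_host_entry() {
    local ip="$1" fqdn="$2" short="$3" file="${4:-/etc/hosts}"
    # -F: fixed string, -w: whole word, so "bir.emre.local" is matched literally.
    if grep -qwF -- "$fqdn" "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s %s\n' "$ip" "$fqdn" "$short" >> "$file"
}
```

On each node this could be called as add_host_entry 192.168.12.11 bir.emre.local bir and add_host_entry 192.168.12.12 iki.emre.local iki.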

Install the cluster packages (Pacemaker) on all nodes using the command below.

# yum install pcs fence-agents-all -y

Allow the high-availability services through the firewall so that the nodes can communicate properly. You can skip this step if the system doesn't have firewalld installed.

# firewall-cmd --permanent --add-service=high-availability
# firewall-cmd --add-service=high-availability

Use the command below to list the services allowed in the firewall.

# firewall-cmd --list-service
dhcpv6-client high-availability ssh

Set a password for the hacluster user, which is the cluster administration account. We suggest setting the same password on all nodes.

# passwd hacluster

Start the pcsd service and enable it to start automatically at system startup.

# systemctl start pcsd.service
# systemctl enable pcsd.service

Remember to run the above commands on all of your cluster nodes.

Cluster Creation:

Authorize the nodes using the command below. Run it on any one of the nodes.

[root@bir ~]# pcs cluster auth bir.emre.local iki.emre.local
Username: hacluster
Password:
bir.emre.local: Authorized
iki.emre.local: Authorized

Create a cluster.

[root@bir ~]# pcs cluster setup --start --name emre_cluster bir.emre.local iki.emre.local
Shutting down pacemaker/corosync services...
Redirecting to /bin/systemctl stop pacemaker.service
Redirecting to /bin/systemctl stop corosync.service
Killing any remaining services...
Removing all cluster configuration files...
bir.emre.local: Succeeded
iki.emre.local: Succeeded
Starting cluster on nodes: bir.emre.local, iki.emre.local...
bir.emre.local: Starting Cluster...
iki.emre.local: Starting Cluster...
Synchronizing pcsd certificates on nodes bir.emre.local, iki.emre.local...
bir.emre.local: Success
iki.emre.local: Success
Restaring pcsd on the nodes in order to reload the certificates...
bir.emre.local: Success
iki.emre.local: Success

Enable the cluster to start at system startup; otherwise, you will need to start the cluster manually every time you restart the system.

[root@bir ~]# pcs cluster enable --all
bir.emre.local: Cluster Enabled
iki.emre.local: Cluster Enabled

Use the command below to get the status of the cluster.

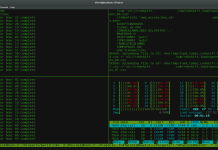

[root@bir ~]# pcs cluster status
Cluster Status:
 Last updated: Fri Mar 25 11:18:52 2016
 Last change: Fri Mar 25 11:16:44 2016 by hacluster via crmd on bir.emre.local
 Stack: corosync
 Current DC: bir.emre.local (version 1.1.13-10.el7_2.2-44eb2dd) - partition with quorum
 2 nodes and 0 resources configured
 Online: [ bir.emre.local iki.emre.local ]
PCSD Status:
  bir.emre.local: Online
  iki.emre.local: Online

Run the command below to get detailed information about the cluster, including its resources, Pacemaker status, and node details.

[root@bir ~]# pcs status
Cluster name: emre_cluster
WARNING: no stonith devices and stonith-enabled is not false
Last updated: Fri Mar 25 11:19:25 2016
Last change: Fri Mar 25 11:16:44 2016 by hacluster via crmd on bir.emre.local
Stack: corosync
Current DC: bir.emre.local (version 1.1.13-10.el7_2.2-44eb2dd) - partition with quorum
2 nodes and 0 resources configured
Online: [ bir.emre.local iki.emre.local ]
Full list of resources:
PCSD Status:
  bir.emre.local: Online
  iki.emre.local: Online
Daemon Status:
  corosync: active/enabled
  pacemaker: active/enabled
  pcsd: active/enabled
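If you want to check from a script that both nodes have joined, the "Online:" line of the status output can be parsed. The following is only a sketch that assumes the output layout shown above; online_count is a hypothetical helper that reads the status text on standard input.

```shell
# Hypothetical helper: read `pcs status` output on stdin and print
# how many nodes are listed on the "Online: [ ... ]" line (0 if none).
online_count() {
    sed -n 's/^ *Online: *\[ *\(.*[^ ]\) *\].*/\1/p' \
        | head -n 1 \
        | wc -w \
        | tr -d ' '
}
```

A possible use is [ "$(pcs status | online_count)" = "2" ] && echo "both nodes online".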

Fencing Devices:

A fencing device is a hardware or software device that isolates a problem node, either by resetting the node or by cutting off its access to the shared storage. My demo cluster runs on VMware virtual machines, so I am not showing a fencing device setup here, but you can follow this guide to set up a fencing device.

Preparing resources:

Apache Web Server:

Install apache server on both nodes.

# yum install -y httpd wget

Edit the configuration file.

# vi /etc/httpd/conf/httpd.conf

Add the content below at the end of the file on all your cluster nodes.

<Location /server-status>
SetHandler server-status
Order deny,allow
Deny from all
Allow from 127.0.0.1
</Location>

Now we need to use the shared storage to store the web content (HTML) files. Perform the operations below on any one of the nodes.

[root@iki ~]# mount /dev/vg_apache/lv_apache /var/www/
[root@iki ~]# mkdir /var/www/html
[root@iki ~]# mkdir /var/www/cgi-bin
[root@iki ~]# mkdir /var/www/error
[root@iki ~]# restorecon -R /var/www
[root@iki ~]# cat <<-END >/var/www/html/index.html
<html>
<body>Hello This Is Coming From Emre Cluster</body>
</html>
END
[root@iki ~]# umount /var/www

Allow the Apache service in the firewall on all nodes.

[root@iki ~]# firewall-cmd --add-service=http
[root@iki ~]# firewall-cmd --permanent --add-service=http

Creating Resources:

Create a filesystem resource for the Apache server. This is the shared storage coming from the iSCSI server.

# pcs resource create httpd_fs Filesystem device="/dev/mapper/vg_apache-lv_apache" directory="/var/www" fstype="ext4" --group apache

Create an IP address resource that will act as a virtual IP for Apache. Clients will use this IP to access the web content instead of an individual node's IP.

# pcs resource create httpd_vip IPaddr2 ip=192.168.12.100 cidr_netmask=24 nic=eth0 --group apache

Create an Apache resource that monitors the status of the Apache server and moves the resource group to another node in case of a failure.

# pcs resource create httpd_ser apache configfile="/etc/httpd/conf/httpd.conf" statusurl="http://127.0.0.1/server-status" --group apache
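The virtual IP and the network interface name are the two values most likely to differ in your environment (the interface is not always called eth0). As a hedged sketch, the three commands above can be generated from those two values and reviewed before they are run; resource_cmds is a hypothetical helper and only prints text. The order is kept as above because resources in a Pacemaker group are started in the order they are added: filesystem first, then the IP, then Apache.

```shell
# Hypothetical helper: print the three resource-creation commands for
# a given virtual IP and NIC name, in group start order. Nothing is executed.
resource_cmds() {
    [ $# -eq 2 ] || { echo "usage: resource_cmds VIP NIC" >&2; return 1; }
    local vip="$1" nic="$2"
    cat <<EOF
pcs resource create httpd_fs Filesystem device="/dev/mapper/vg_apache-lv_apache" directory="/var/www" fstype="ext4" --group apache
pcs resource create httpd_vip IPaddr2 ip=$vip cidr_netmask=24 nic=$nic --group apache
pcs resource create httpd_ser apache configfile="/etc/httpd/conf/httpd.conf" statusurl="http://127.0.0.1/server-status" --group apache
EOF
}
```

For example, resource_cmds 192.168.12.100 eth0 prints the commands used in this guide; once they look right, the output can be piped to sh on one of the cluster nodes.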

Since we are not using fencing, disable STONITH. The cluster will not start resources while STONITH is enabled and no fencing device is configured, so this step is required here; however, disabling STONITH in a production environment is not recommended.

# pcs property set stonith-enabled=false

Check the status of the cluster.

[root@bir ~]# pcs status
Cluster name: emre_cluster
Last updated: Fri Mar 25 13:47:55 2016
Last change: Fri Mar 25 13:31:58 2016 by root via cibadmin on bir.emre.local
Stack: corosync
Current DC: iki.emre.local (version 1.1.13-10.el7_2.2-44eb2dd) - partition with quorum
2 nodes and 3 resources configured
Online: [ bir.emre.local iki.emre.local ]
Full list of resources:
 Resource Group: apache
     httpd_vip (ocf::heartbeat:IPaddr2): Started bir.emre.local
     httpd_ser (ocf::heartbeat:apache): Started bir.emre.local
     httpd_fs (ocf::heartbeat:Filesystem): Started bir.emre.local
PCSD Status:
  bir.emre.local: Online
  iki.emre.local: Online
Daemon Status:
  corosync: active/enabled
  pacemaker: active/enabled
  pcsd: active/enabled

Once the cluster is up and running, point a web browser to the Apache virtual IP (192.168.12.100); you should see the test page created earlier ("Hello This Is Coming From Emre Cluster").

Let's test the failover by stopping the cluster on the active node; the resources should move to the other node.

[root@bir ~]# pcs cluster stop bir.emre.local
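To confirm where the resources ended up after the stop, you can look for the "Started" column in the status output. The sketch below assumes the resource lines look like the ones in the status output shown earlier; resource_node is a hypothetical helper that reads the status text on standard input.

```shell
# Hypothetical helper: read `pcs status` output on stdin and print the node
# on which the named resource is reported as "Started" (empty if not started).
resource_node() {
    awk -v res="$1" '
        $1 == res {
            for (i = 2; i <= NF; i++)
                if ($i == "Started") { print $(i + 1); exit }
        }'
}
```

Run on the surviving node, pcs status | resource_node httpd_vip should print iki.emre.local once the failover has completed.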

Important cluster commands:

# pcs resource move apache bir.emre.local
# pcs resource disable apache
# pcs resource enable apache
# pcs resource restart apache
# pcs resource delete resourcename

To stop a resource or resource group, use disable; enable starts it again.

Thanks for reading. Please let us know your thoughts in the comment section.